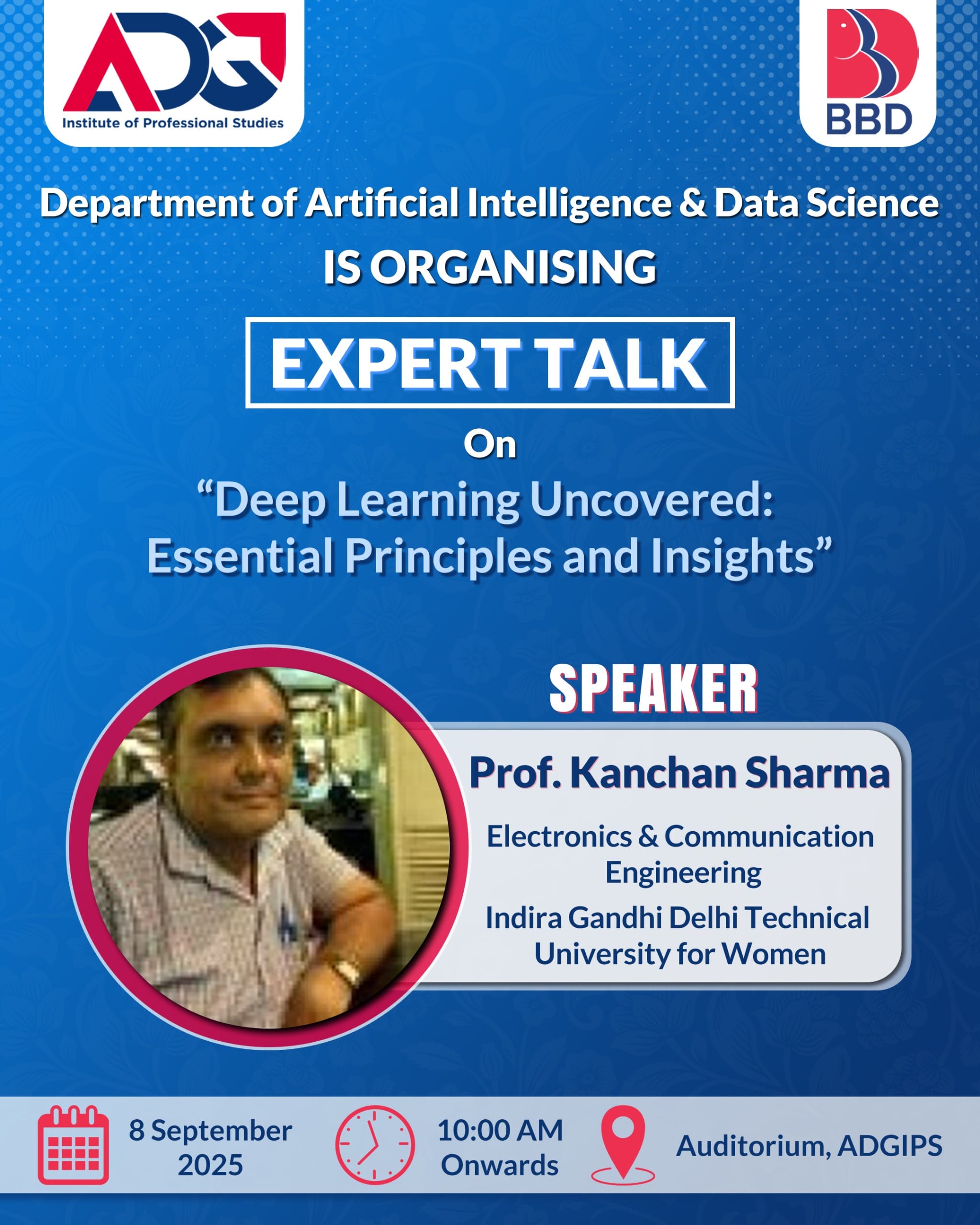

Deep Learning Uncovered: Essential Principles and Insights

Organized By: Department of Artificial Intelligence & Data Science

Speaker: Prof. Kanchan Sharma, Indira Gandhi Delhi Technical University for Women (IGDTUW)

Date & Time: 8th September 2025, 10:00 am onwards

Venue: Auditorium, Dr. Akhilesh Das Gupta Institute of Professional Studies

1. Introduction

This report summarizes the expert talk delivered by Prof. Kanchan Sharma on the topic “Deep Learning Uncovered: Essential Principles and Insights”. The session was organized for students and faculty of the AI & Data Science (AI&DS) department at Dr. Akhilesh Das Gupta Institute of Professional Studies.

Prof. Sharma presented a balanced mix of foundational theory, practical guidance, research perspectives, and career advice relevant to undergraduate and postgraduate students pursuing machine learning and deep learning.

2. Objectives of the Talk

- To demystify core concepts underpinning modern deep learning.

- To connect theoretical building blocks with practical modelling strategies.

- To highlight recent trends, common pitfalls, and best practices in model development and deployment.

- To provide research and career guidance to students interested in deep learning.

3. Speaker Profile (Brief)

Prof. Kanchan Sharma is a faculty member at Indira Gandhi Delhi Technical University for Women (IGDTUW). She has a strong academic and research background in machine learning and deep learning, with several publications and guided student projects in related areas.

Prof. Sharma is known for making complex topics approachable and for guiding students toward research and industry-relevant projects.

4. Summary of the Presentation

4.1 Foundations and Intuition

Prof. Sharma began with a refresher on the building blocks of neural networks: neurons, activation functions, loss functions, and optimization. She emphasized the intuition behind commonly used activation functions (ReLU, Sigmoid, Tanh) and explained why nonlinear activations are essential for representation learning.
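As an illustration (a sketch written for this report, not code shown in the session), the NumPy snippet below implements the three activations named above and checks the standard argument for nonlinearity: two linear layers with no activation between them compute exactly the same function as one linear layer whose weight matrix is the product of the two. The layer sizes and random seed are arbitrary assumptions.

```python
import numpy as np

def relu(x):
    # max(0, x), applied element-wise
    return np.maximum(0.0, x)

def sigmoid(x):
    # squashes inputs into the range (0, 1)
    return 1.0 / (1.0 + np.exp(-x))

def tanh(x):
    # squashes inputs into the range (-1, 1); zero-centered
    return np.tanh(x)

rng = np.random.default_rng(0)
W1 = rng.normal(size=(4, 3))   # first "layer": 3 inputs -> 4 hidden units
W2 = rng.normal(size=(2, 4))   # second "layer": 4 hidden units -> 2 outputs
x = rng.normal(size=3)

# Two linear layers with no activation in between...
two_linear = W2 @ (W1 @ x)
# ...equal a single linear layer whose weight matrix is W2 @ W1.
collapsed = (W2 @ W1) @ x
print(np.allclose(two_linear, collapsed))  # True

# Inserting a nonlinearity between the layers breaks this equivalence in general.
with_relu = W2 @ relu(W1 @ x)
```

Placing `relu` between the two matrix products is what removes the collapse, which lets deeper networks represent functions that a single linear layer cannot.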

4.2 Architectural Patterns

She reviewed canonical architectures and their application domains:

- Feedforward Networks (MLPs): Tabular data and simple pattern-recognition tasks

- Convolutional Neural Networks (CNNs): Vision tasks, built on local receptive fields and parameter sharing

- Recurrent & Transformer-based Models: Sequence modeling, attention mechanisms, and why transformers replaced classical RNNs in many cases

- Autoencoders & Generative Models: Representation learning and generation (VAEs, GANs)
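To make the attention idea concrete, here is a minimal single-head scaled dot-product attention in NumPy, computing `softmax(Q K^T / sqrt(d_k)) V`. It is an illustrative sketch with assumed toy sizes, random matrices standing in for learned projections, and no masking or multiple heads. Unlike an RNN, which processes a sequence one step at a time, every position here attends to every other position through a single matrix product, which is one commonly cited reason transformers train efficiently on parallel hardware.

```python
import numpy as np

def softmax(z, axis=-1):
    # Subtract the row maximum for numerical stability before exponentiating.
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """Q: (n_q, d_k), K: (n_k, d_k), V: (n_k, d_v) -> output (n_q, d_v)."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)     # similarity of every query to every key
    weights = softmax(scores, axis=-1)  # each row is a distribution over keys
    return weights @ V, weights

rng = np.random.default_rng(0)
seq_len, d_model = 5, 8
X = rng.normal(size=(seq_len, d_model))  # toy "token embeddings"

# Hypothetical projection matrices; in a real transformer these are learned.
W_q, W_k, W_v = (rng.normal(size=(d_model, d_model)) for _ in range(3))

out, attn = scaled_dot_product_attention(X @ W_q, X @ W_k, X @ W_v)
print(out.shape, attn.shape)  # (5, 8) (5, 5)
```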

4.3 Training Dynamics & Optimization

Key practical components discussed:

- Gradient Descent Variants: SGD, Momentum, and Adam, and the trade-offs among them

- Regularization Techniques: Weight decay (L2), dropout, data augmentation, early stopping

- Normalization Strategies: Batch normalization, layer normalization

- Learning Rate Schedules: Cyclical learning rate, warm restarts
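The trade-offs between optimizers can be seen on a small example. The sketch below, written for this report rather than taken from the talk, applies plain SGD, SGD with (heavy-ball) momentum, and Adam to an ill-conditioned quadratic `f(w) = 0.5 * w^T A w`. The matrix `A`, the starting point, the learning rates, and the step counts are assumptions chosen for illustration; the update rules follow the standard textbook forms.

```python
import numpy as np

# Ill-conditioned quadratic f(w) = 0.5 * w^T A w, minimized at w = 0.
A = np.diag([1.0, 25.0])

def grad(w):
    return A @ w

def sgd(w, lr=0.03, steps=200):
    for _ in range(steps):
        w = w - lr * grad(w)
    return w

def momentum(w, lr=0.03, beta=0.9, steps=200):
    v = np.zeros_like(w)
    for _ in range(steps):
        v = beta * v + grad(w)  # running, exponentially decayed direction
        w = w - lr * v
    return w

def adam(w, lr=0.05, b1=0.9, b2=0.999, eps=1e-8, steps=200):
    m = np.zeros_like(w)
    s = np.zeros_like(w)
    for t in range(1, steps + 1):
        g = grad(w)
        m = b1 * m + (1 - b1) * g        # first-moment (mean) estimate
        s = b2 * s + (1 - b2) * g * g    # second-moment (uncentered variance) estimate
        m_hat = m / (1 - b1 ** t)        # bias correction for zero initialization
        s_hat = s / (1 - b2 ** t)
        w = w - lr * m_hat / (np.sqrt(s_hat) + eps)
    return w

w0 = np.array([5.0, 1.0])
for name, opt in [("SGD", sgd), ("Momentum", momentum), ("Adam", adam)]:
    w = opt(w0.copy())
    print(f"{name:9s} final loss = {0.5 * w @ A @ w:.2e}")
```

One design point worth noting: because Adam divides each coordinate's first-moment estimate by the square root of its second-moment estimate, its first step has size close to `lr` in every coordinate regardless of how large the gradient is. Plain SGD takes steps proportional to the gradient, so its learning rate must be small enough for the steepest direction.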

4.4 Practical Model Building

Prof. Sharma shared an end-to-end workflow:

- Problem framing

- Data collection and cleaning

- Exploratory data analysis

- Model selection and hyperparameter tuning

- Validation strategies (cross-validation vs. holdout)

- Final evaluation

She also stressed reproducibility through seed control, environment management, and experiment tracking.
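A minimal way to combine seed control with the two validation strategies is to derive every split from an explicitly seeded NumPy `Generator`, so that the same seed always yields the same partition. The helper names `holdout_split` and `kfold_indices` are hypothetical and written for this report; libraries such as scikit-learn provide maintained equivalents.

```python
import numpy as np

def holdout_split(n, test_frac, seed):
    """Return (train_idx, test_idx) for a single shuffled holdout split."""
    rng = np.random.default_rng(seed)  # explicit seed, no hidden global state
    idx = rng.permutation(n)
    n_test = int(round(n * test_frac))
    return idx[n_test:], idx[:n_test]

def kfold_indices(n, k, seed):
    """Yield (train_idx, val_idx) for each of k folds over shuffled indices."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n)
    folds = np.array_split(idx, k)  # k nearly equal, non-overlapping folds
    for i in range(k):
        val = folds[i]
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train, val

# Usage: rerunning with the same seed reproduces exactly the same folds.
for fold, (train_idx, val_idx) in enumerate(kfold_indices(10, k=5, seed=42)):
    print(fold, val_idx)
```

Deep learning frameworks keep their own random state (weight initialization, dropout, data shuffling), so a full experiment typically needs each framework's seeding call as well; seeding NumPy alone does not cover them.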

4.5 Interpretability, Robustness & Ethics

The talk also covered:

- Model interpretability (saliency maps, SHAP, Integrated Gradients)

- Robustness to distribution shifts and adversarial examples

- Ethical considerations such as bias, fairness, and responsible data handling
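Integrated Gradients can be illustrated on a toy model whose gradient is known analytically. The sketch below assumes a hypothetical logistic model `f(x) = sigmoid(w @ x + b)` with made-up weights, and approximates the path integral from an all-zeros baseline to the input with a midpoint Riemann sum. The gradient at the input alone (`grad_f(x)`) corresponds to a plain saliency map; Integrated Gradients instead averages gradients along the straight path from the baseline, and its attributions sum to approximately `f(x) - f(baseline)` (the completeness property).

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical toy model: f(x) = sigmoid(w @ x + b), with made-up parameters.
w = np.array([1.5, -2.0, 0.5])
b = 0.1

def f(x):
    return sigmoid(w @ x + b)

def grad_f(x):
    # Analytic gradient: sigmoid'(z) * w, where sigmoid'(z) = s * (1 - s).
    s = f(x)
    return s * (1.0 - s) * w

def integrated_gradients(x, baseline, steps=200):
    # Midpoint Riemann approximation of the integral of the gradient
    # along the straight line from baseline to x.
    alphas = (np.arange(steps) + 0.5) / steps
    grads = np.array([grad_f(baseline + a * (x - baseline)) for a in alphas])
    return (x - baseline) * grads.mean(axis=0)

x = np.array([1.0, -0.5, 2.0])
baseline = np.zeros_like(x)

saliency = grad_f(x)                       # gradient at the input only
attr = integrated_gradients(x, baseline)   # per-feature attributions
print(saliency)
print(attr)
print(attr.sum(), f(x) - f(baseline))      # approximately equal (completeness)
```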

4.6 Research Directions & Industry Trends

Prof. Sharma highlighted key trends:

- Self-supervised learning

- Foundation models

- Efficient inference (quantization, pruning)

- Multimodal learning

She encouraged students to explore research papers and work on reproducible projects.
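The two efficient-inference techniques mentioned in the list above can each be sketched in a few lines. The example below assumes symmetric, per-tensor post-training quantization to int8 and unstructured magnitude pruning, two common variants among many; the weight matrix is random stand-in data, not a trained model.

```python
import numpy as np

def quantize_int8(w):
    # Symmetric per-tensor quantization: map [-max|w|, max|w|] onto [-127, 127].
    scale = np.abs(w).max() / 127.0
    if scale == 0:
        scale = 1.0  # all-zero tensor: any nonzero scale works
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize(q, scale):
    return q.astype(np.float32) * scale

def magnitude_prune(w, sparsity):
    # Zero out the given fraction of weights with the smallest absolute value.
    k = int(sparsity * w.size)
    if k == 0:
        return w.copy()
    threshold = np.sort(np.abs(w), axis=None)[k - 1]
    return np.where(np.abs(w) > threshold, w, 0.0)

rng = np.random.default_rng(0)
W = rng.normal(size=(64, 64)).astype(np.float32)  # stand-in weight matrix

q, scale = quantize_int8(W)
err = np.abs(dequantize(q, scale) - W).max()
print(f"int8 storage: {q.nbytes} bytes vs float32: {W.nbytes} bytes")
# int8 storage: 4096 bytes vs float32: 16384 bytes
print(f"max round-trip error {err:.4f} (bound: scale/2 = {scale / 2:.4f})")

W_pruned = magnitude_prune(W, sparsity=0.5)
print(f"fraction of zero weights after pruning: {np.mean(W_pruned == 0):.2f}")
```

Storing int8 values cuts weight memory by a factor of four relative to float32, and each dequantized weight is off by at most half a quantization step (`scale / 2`). Production toolchains add refinements such as per-channel scales, calibration data, and fine-tuning after pruning.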

4.7 Career Guidance

Practical advice for students:

- Build a strong portfolio with 2–3 well-documented projects

- Contribute to open-source or reproduce research papers

- Strengthen fundamentals in linear algebra, probability, and optimization

- Pursue internships and faculty collaborations

5. Key Takeaways for AI&DS Students

- Understanding beats memorization: Focus on concepts, not just tools

- Data quality often matters more than model size: Clean, representative data usually does more for performance than a larger model

- Experiment systematically: Change one parameter at a time and track results carefully
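The advice to change one parameter at a time can be made mechanical. The sketch below generates configurations that each differ from a baseline in exactly one hyperparameter and records every run in a JSON-serializable log. The parameter names, values, and the `run_experiment` placeholder are hypothetical; in practice it would train and evaluate a model, and a dedicated experiment tracker could replace the plain list.

```python
import json

# Hypothetical baseline configuration; names and values are illustrative only.
BASELINE = {"lr": 1e-3, "batch_size": 32, "dropout": 0.1}

def one_factor_variants(baseline, grid):
    """Yield (changed_key, config) pairs that differ from baseline in one key."""
    for key, values in grid.items():
        for value in values:
            if value != baseline[key]:
                cfg = dict(baseline)
                cfg[key] = value
                yield key, cfg

def run_experiment(cfg):
    # Placeholder for a real training run; returns a dummy score so the
    # sketch executes. Replace with actual training and evaluation.
    return 0.0

grid = {"lr": [1e-4, 1e-3, 1e-2], "dropout": [0.0, 0.1, 0.3]}

# Record the baseline first, then every single-parameter variant.
log = [{"changed": None, "config": BASELINE, "score": run_experiment(BASELINE)}]
for changed, cfg in one_factor_variants(BASELINE, grid):
    log.append({"changed": changed, "config": cfg, "score": run_experiment(cfg)})

print(json.dumps(log, indent=2))
```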